Turns out I was asked to write a blog post about the Software Development Lifecycle (SDLC), with no further guidance given (well, that’s kinda nebulous - so here are some musings!).

As background, I’ve been in the industry professionally (i.e. earning a crust) since 1992, after completing a computing and business degree. I’ve worked for HP, IBM and various smaller software development companies providing business process improvements through technology.

I’ve seen many different methodologies over the years, from the super-rigid Structured Software Analysis and Design Method (SSADM) at university through various RAD (Rapid Application Development) methods in the 90s, and on to “Agile” in the 2000s.

The truth is most of these approaches to software development have a lot more in common than one might suppose (developing software involves an awful lot of common sense, but we codify this common sense so every stakeholder is on the same page and we don’t accidentally miss important things when under pressure to deliver).

What I’m going to talk about non-exhaustively in this post is “what is an SDLC?”, and what are some common components of the methodologies that support delivering products in such a cycle.

So what is a Software Development Lifecycle?

The SDLC is the cycle of activities that need to be performed to take a system (infrastructure, software, personnel, procedures) from an initial idea to a working product in production usage with ongoing support. The cycle often repeats with new features being added (or just simple maintenance to extend system life and address defects and vulnerabilities) until system demise.

Fundamentally the SDLC covers everything from the conception to the eventual death of your system (which might be many years away or very soon depending on what problem you happen to be solving).

A bit of history on Software Development Lifecycles - where did we start?

In the early days of systems development, we typically built Online Transaction Processing (OLTP) systems that ran on mainframes. These systems had relatively primitive developer tooling and were quite inflexible with regards to making changes to requirements once development had commenced.

Any user interfaces were relatively primitive and often fairly tightly constrained by the platform - often just a dumb terminal.

These constraints inevitably led to the waterfall approach to systems development. The problem was analysed in full, and the required system was specified in great detail. Once the design was signed off, the development team would build the system. Each component or module would be unit tested and then finally the system would be rolled into an acceptance test environment. If this testing demonstrated that it met the specification, it would be deployed into production. Changes would then follow a similar rigid cycle (and might not happen very frequently). These cycles from initial idea through specification and into delivery often spanned significant timescales.

This approach worked reasonably well for OLTP systems, where a set of well-defined inputs were being transformed into a set of equally well-defined outputs, often with little user interaction. By getting the specification “correct” at the start, expensive re-work was avoided (remembering minimal tooling to help, coupled with expensive compute-time).

Of course, there was one hitch - and that was that the process was very dependent on the quality and accuracy of the initial analysis and design work (and that the requirement hadn’t evolved since that work had been performed).

Users had little familiarity with computer systems, so knowing what was possible and expressing what they wanted were difficult.

This made for a significant burden on the Systems Analyst in terms of both sharing the possible and eliciting the requirements. Users often didn’t get to experience the system they had commissioned until it was already built.

At that point it was too late (expensive) to make significant changes if the system didn’t really meet their needs. That left a number of options (a) use a system that wasn’t really fit for purpose, (b) go back and fix the design and engage in expensive rework, or (c) consider the project a failure. Failures and rework made systems development expensive and often meant once in place, systems lived longer than they possibly should (“don’t mess with something that ain’t broke”).

Client/server computing in the early 1990s

The early 1990s saw a change in the types of system we were developing, with the short-lived revolution of client/server computing.

During this period, we shifted to having very rich thick clients (generally Windows applications built using Fourth Generation Languages (4GLs), such as Visual Basic or PowerBuilder connecting to back end services on various UNIX platforms, often using databases such as ORACLE, DB2 and Sybase.

This shift to a richer client experience meant that users needed a lot more input into the design of the systems they would be using. The new tooling (often with drag and drop for the UI) also meant that it was possible to mock up a User Interface quickly to show the user what they would get and get quick feedback, which would then allow refinement.

Rapid Application Development was born of these new capabilities. It was common to prototype in Visual Basic, even if the final product was being built in C++ or another more capable 4GL.

There were several popular RAD methods, such as Rational Unified Process (RUP) and Dynamic Systems Development Method (DSDM). They all had a focus on iteration, rather than trying to jump straight to a complete final outcome.

How everything changed with Agile

The most common software development methods from 2000 onwards follow an “Agile” pattern, and are really an evolution of what came with RAD, rather than a wholescale revolution.

The Agile approach (a common example being Scrum) encourages us to break our monolithic development projects into much smaller bite sized chunks that act as stepping stones to a fully implemented system. We are also introduced to the concept of a Minimum Viable Product (MVP), which is the most restricted set of functionality with which a new system can be considered useful.

Agile processes encourage us to develop an MVP and then iterate over that, improving and adding features in a (potentially) continuous cycle. We typically define a small set of user stories, refine them, implement them, and then add more stories as each is signed off by the customer.

From early in the project, the customer is able to interact with the fledgling system and provide feedback which is able to improve current and future work. Dead ends and “bad ideas” are identified early before significant investment has been wasted.

Bad user experiences and patterns can be identified and rectified before they proliferate.

In an Agile world, we break our project into “sprints” (time-boxed efforts, often two weeks in duration). We commence each sprint with a planning meeting, in which the stakeholders agree on the stories that we will tackle. The choices are based on the size of the stories (usually measured in points, rather than hours), partner preference, and dependency on other stories.

We finish our sprints by presenting our work to the partner and looking back at what went well and not so well in the sprint, so we can improve our process for the next one.

Every sprint, the stakeholders are engaged and there are no surprises building up.

It is possible to be too agile (or be agile with the wrong things)

A problem with approaching systems in bite-sized chunks that the end-user can interact with and sign-off is that not all systems are well-suited to that split.

Important platform capabilities can require much longer than a single sprint to establish and don’t necessarily yield features that can be demonstrated to the team at the end of a sprint. Trying to force that fit can create bad outcomes in terms of performance and maintainability.

Sprint Zero at MadeCurious

MadeCurious generally performs a “Sprint Zero” exercise before the main development sprints commence. This sprint often has a smaller team than normal (on both our side and partner side) and is also often longer in duration than the standard two weeks.

The purpose of this sprint is to ensure adequate architectural thinking and design has been progressed before feature delivery. It exists to balance weekly “iterative progression” with “design rigour”, ensuring the necessary foundations are well understood.

We often make good progress on database design to ensure that we have a clean model and are not engaging in excessive re-design during the development process.

As MadeCurious’s platform has matured, some of the activities we might have previously put into a sprint zero have become matters of prior experience for the kinds of projects we typically tackle, and this means we can spend this time on elements that are specific to the current project.

This is, of course, an advantage of developing many projects on a common - and growing - platform. Each new project is able to gain from the lessons and work products of prior projects.

This early part of the project can also be used to perform technical spikes to investigate areas of technical uncertainty and refine choices, and estimates for future stories.

Development sprints

Our regular development sprints see the next tranche of stories developed and the last batch of stories user tested. As stories from the previous sprint go through acceptance testing, we expect to find defects (though hopefully not too many) and things that turned out to be not quite what the end user wanted.

For defects, we usually fix those in-sprint. For iterative changes to behaviour, those may require some agreement and re-design, and will likely be pushed to a later sprint, depending on the effort involved.

In the background, the BA and user teams will be plugging away eliciting and documenting the requirements for the next stories, with the goal to always be a couple of sprints ahead of development. This means that if a sprint rocks, there is already specified work available to pull in from the backlog.

Agile ceremonies (yeah, it’s just “meetings”)

These are the regular meetings that we engage in to ensure we remain on-track building the right thing and that we are operating with good knowledge of what our team-mates are working on.

Arguably the most important is the team standup, where the team quickly runs through their current tasks and any blockers they might be encountering (or useful learning points). Depending on the project, these might be every day or less frequently. It is important to keep them short, snappy and useful.

The sprint planning, refinement, and end of sprint retrospectives are all considered to be agile ceremonies.

Velocity

When we estimate our work, we (generally) don’t think of each piece of work in terms of the number of hours or days. We try to estimate it relative to the other pieces of work in the project by assigning “story points”. Of course, when estimating we are thinking “how long will this take me?”, but we are recording this using a point system, with a common agreement of roughly what we think a point maps to at the time.

Generally we start our projects with a common understanding that a working day is broadly equivalent to a point.

So why go through this charade of points not hours?

It really comes to the fact that humans are notoriously bad at estimating, especially if we haven’t done a specific task before. As the team starts to deliver on these story points, we get a real idea of how a story maps onto an amount of time.

This mapping is our velocity.

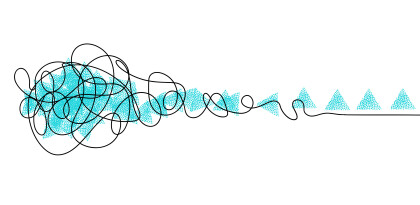

Usually our velocity follows a curve, starting slower, ramping up to a peak as the team gels together and builds efficiencies (based on picking up smarter ways of doing things) and then tends to tail off a bit at the end as we have to mop up issues that have had to roll over from earlier sprints.

We like to try and keep teams together between projects wherever possible as this really helps keep a strong velocity even across projects and partners.

Minimum viable or minimum happy

We try to not just establish what the Minimum Viable Product is, but also what we often refer to as “Minimum Happy Product” (MHP).

Whilst MVP is the absolute minimum feature set that will allow the partner to perform their business with the system, there is usually a superset of that functionality that delivers a much greater level of delight. MVP is an important fallback position to have, but it isn’t generally where we should be aiming to finish a project at.

Often small increments beyond the bare minimum can create enormous improvements to the user experience for a small financial outlay. Agile can get a bad reputation when it is used to replace end-of-life (but generally liked) happy place systems with modern MVPs that don’t make life easier for the end user.

For a system to be successful, your users really should be excited to switch to it.

Automated testing

In any modern agile development we are incrementally adding functionality. Sometimes we have to make changes that impact things we already built in order to account for design refinements. Throughout this process, we need to know if we break anything.

This makes automated testing crucial.

Generally, each new delivered feature shouldn’t be considered complete unless it has been supplied with an automated test. Such tests should be hooked into the Continuous Integration (CI) environment so that each new commit runs the tests and immediately highlights regressions.

Having worked on projects with both good and bad test coverage, I know that those where tests were de-scoped (usually for budgetary reasons) have a significantly higher maintenance cost and risk. Of course, if a system is only being developed as a stop-gap with a planned short lifespan, the testing regime will be different than if you expect it to be in service for many years.

Continuous communication, learning and improvement

Whatever specific agile method you choose to use, a software development process should ensure the right people have the right information at the right time to build the right thing.

It should facilitate early identification when it turns out we might be building the wrong thing and give us the tools to rapidly course-correct.

But above all, it’s probably mostly going to be a whole lot of common sense and it is probably going to change over time as you iterate on what worked well and what didn’t or bring in new ideas from fresh team members with different past experiences.