In the previous post in this series, we charted our journey through the realm of JavaScript frameworks and other options available to help build our redesigned website (with a strong focus on delivering value).

In this post, we’re going to take a deeper dive into the implementation of our selected approach: Silverstripe, Vanilla JavaScript, and the Module Pattern.

Opting out of React: filling in the gaps

While React and a headless CMS approach might be right for some projects, there will be occasions when the increased complexity is hard to justify or it simply isn’t the right fit for the project. We found ourselves in this situation when choosing an approach for redeveloping our company website.

This post aims to help fill in the gaps when implementing JavaScript without an opinionated framework or concrete set of best practices to guide you.

In our case, we were building a Silverstripe site, but the same approach could be adapted to work with other server-rendered content management systems like WordPress or static site generators like Hugo or Jekyll.

What is the module pattern?

At its core, the Module Pattern utilises closures to provide public and private encapsulation in objects. If that sounds complex, think of it like a vending machine: You have a public interface (buttons you press to get a drink) and a private inner mechanism that's hidden from view and manages the machine's operation. In JavaScript, closures allow functions to access outer function scopes, even after the outer function has returned. This is what we harness in the Module Pattern.

An immediately invoked function expression (IIFE) is often used to create a module. It's a function that runs as soon as it is defined and is the building block of our pattern.
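To make the closure idea concrete, here is a minimal sketch. The counter module and its method names are hypothetical and exist purely for illustration. The IIFE has already returned by the time we call increment, yet the returned functions can still read and update count.

```javascript
// A hypothetical counter module, used only to illustrate closures.
const counter = (function () {
  // Private state: only reachable by the functions defined in this scope.
  let count = 0

  return {
    increment: () => {
      count += 1
      return count
    },
    current: () => count,
  }
})()

counter.increment()
counter.increment()
console.log(counter.current()) // 2
console.log(counter.count) // undefined: the variable itself is never exposed
```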

Advantages of the Module Pattern

The Module Pattern brings several key benefits to the table:

- Scope Management: It keeps the global scope clear of unnecessary clutter, reducing the risk of variable collisions.

- Encapsulation: By hiding its variables and functions within a closure, it ensures that only the exposed public API is accessible from outside the module.

- Maintainability: Organised code is easier to maintain, debug, and extend.

Basic Implementation of the Module Pattern

Let's start with a basic implementation:

    const myModule = (function() {
      // Private: only visible inside this function's scope
      const privateVariable = 'Hello World'

      const privateMethod = () => {
        console.log(privateVariable)
      }

      // Public API: the only part reachable from outside
      return {
        publicMethod: () => {
          privateMethod()
        }
      }
    })()

    myModule.publicMethod() // Outputs: 'Hello World'
    myModule.privateMethod() // Throws a TypeError: privateMethod is not part of the public API

In the above example, privateVariable and privateMethod are not accessible from outside myModule. Only publicMethod is exposed.

A Real-World Example: Creating a Modal

Now, let's apply the Module Pattern to create a modal component:

    const modalModule = (function() {
      // Queried as soon as the script runs, so load it after the modal markup
      const modal = document.getElementById('js-modal')
      const closeBtn = document.getElementById('js-close-modal-btn')
      const openModalBtns = document.querySelectorAll('.js-open-modal-btn')

      const showModal = () => {
        modal.style.display = 'block'
      }

      const hideModal = () => {
        modal.style.display = 'none'
      }

      return {
        // Attach every listener the modal needs
        init: () => {
          closeBtn.addEventListener('click', hideModal)
          openModalBtns.forEach((btn) => {
            btn.addEventListener('click', showModal)
          })
        },
        // Remove the same listeners again
        destroy: () => {
          closeBtn.removeEventListener('click', hideModal)
          openModalBtns.forEach((btn) => {
            btn.removeEventListener('click', showModal)
          })
        }
      }
    })()

    // Initialisation
    window.onload = () => {
      modalModule.init()
    }

This module keeps the modal's logic neatly packaged, exposing only the init method to start the modal's functionality and the destroy method to remove the event listeners.

Initialising and De-initialising Components

Proper initialisation and clean-up, often referred to as setup and teardown, are crucial for several reasons:

- Resource Management: Initialisation and clean-up methods ensure that memory is allocated when it’s needed and released when it no longer is. This prevents memory leaks, which can lead to performance issues and even application crashes.

- Performance: As mentioned above, without proper teardown, components may not be garbage collected, leading to decreased performance over time due to increased memory usage.

- Consistency: Proper setup ensures that each component starts from a known, consistent state. If the initialisation process is incomplete or incorrect, the component may behave unpredictably or cause errors.

- Event Management: Event listeners that aren't properly removed during teardown can cause unexpected behaviour, as they may continue to respond to events even after the relevant component is no longer in use.

- Data Integrity: Applications often interact with data sources, such as APIs or databases. Initialisation may involve setting up data bindings or state management. If these aren't set up or torn down correctly, it could result in inconsistent application state or data corruption.

- User Experience: Users expect applications to be responsive and efficient. Proper initialisation and clean-up help ensure a smooth user experience by avoiding slow transitions, unresponsive UI components, and crashes.
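As a rough sketch of setup and teardown in practice, the hypothetical createClickCounter factory below (not taken from our codebase) attaches its listener in init, removes it in destroy, and resets its private state so the next init starts from a known point. A bare EventTarget stands in for a button element so the example can run outside a browser.

```javascript
// Hypothetical factory: `target` is anything implementing the EventTarget
// interface (a DOM element in the browser, a plain EventTarget elsewhere).
const createClickCounter = (target) => {
  let clicks = 0
  let isInitialised = false

  // Keep a stable reference so removeEventListener can find the same function.
  const handleClick = () => {
    clicks += 1
  }

  return {
    init: () => {
      if (isInitialised) return // already set up, nothing to do
      target.addEventListener('click', handleClick)
      isInitialised = true
    },
    destroy: () => {
      if (!isInitialised) return
      target.removeEventListener('click', handleClick)
      clicks = 0 // return to a known state for the next init()
      isInitialised = false
    },
    getClicks: () => clicks,
  }
}

// Usage, with a bare EventTarget standing in for a button element
const button = new EventTarget()
const clickCounter = createClickCounter(button)

clickCounter.init()
clickCounter.init() // second call is ignored
button.dispatchEvent(new Event('click'))
console.log(clickCounter.getClicks()) // 1

clickCounter.destroy()
button.dispatchEvent(new Event('click'))
console.log(clickCounter.getClicks()) // 0: the listener is gone
```

Passing a named function (rather than an inline arrow function) to addEventListener is what makes the teardown possible, since removeEventListener needs the same function reference.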

Creating modules: tips and pitfalls

- Modules should be small and focused on a single responsibility. This should keep complexity down, increase interoperability, allow for easier reuse of functionality, and keep your code maintainable. If a module is becoming too large or complex, break it down into smaller parts.

- Avoid tightly coupling modules together. For instance, if you’re following the first point and need to refactor a large component into multiple modules, don’t hardcode shared values or functionality. Instead, use your modules’ public APIs to allow them to interact and pass required information between one another. This allows other modules to do the same in future if they require similar functionality.

- Be deliberate about what you make public. A good way to go about this is to keep a variable or function private until you need it to be public.

- With our templates being server-rendered, we wave goodbye to the reactive properties we’re used to with React and instead embrace the technique of DOM manipulation (cue sobs). Not ideal, but there are strategies we can employ to make this technique slightly less fragile - more on this under Approaching DOM Manipulation below.
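To illustrate the tip about loose coupling, here is a small sketch in which one module talks to another only through its public API. Both scrollLockModule and menuModule are hypothetical names invented for this example. The menu never touches the lock counter directly, so any other module could reuse the scroll lock in the same way.

```javascript
// Hypothetical module: tracks whether page scrolling should be locked.
const scrollLockModule = (function () {
  let lockCount = 0 // private: how many callers currently want the lock

  return {
    lock: () => {
      lockCount += 1
    },
    unlock: () => {
      lockCount = Math.max(0, lockCount - 1)
    },
    isLocked: () => lockCount > 0,
  }
})()

// Hypothetical module: a menu that needs scrolling locked while it is open.
// It receives the scroll lock's public API instead of touching its internals.
const menuModule = (function (scrollLock) {
  let isOpen = false

  return {
    open: () => {
      if (isOpen) return
      isOpen = true
      scrollLock.lock()
    },
    close: () => {
      if (!isOpen) return
      isOpen = false
      scrollLock.unlock()
    },
    isOpen: () => isOpen,
  }
})(scrollLockModule)

menuModule.open()
console.log(scrollLockModule.isLocked()) // true
menuModule.close()
console.log(scrollLockModule.isLocked()) // false
```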

Adopting different patterns

The Module Pattern works really well for us and feels like a good fit given the component-based approach we take in our React projects. However, it’s worth noting that the pattern itself is not the key ingredient to a successful implementation of Vanilla JavaScript. The real value is in wrapping some rules and consistency around your codebase, making it easier for developers to navigate and extend. Without these, a codebase tends to deteriorate over time (regardless of how clean the code is at the start of the project).

Integrating the Module Pattern into Silverstripe

Once you’ve got your head around the Module Pattern (or whichever pattern feels right for you), it’s time to set up your JavaScript file structure and build tools. As with selecting a pattern to follow, there is no right or wrong approach here. I’ll give some examples that work for us but there’s no reason this setup can’t be modified to work with your particular context and tools you’re familiar with.

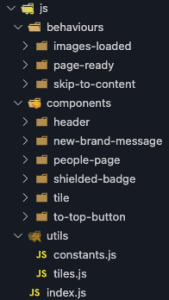

Setting up your file structure

Within our Silverstripe theme, we have a src directory for all our raw code (which our build tools optimise, minify and concatenate into a dist directory). Within our src directory we have two folders - js and styles. I won’t be covering our CSS setup in this series but our js folder looks like this:

Following an existing pattern from our React projects, the majority of our JavaScript files are named index.js and placed in a directory that gives the module its name (subdirectories and sub-modules follow this same pattern). There are arguments for and against this pattern so you just do you :)

We made the choice to organise our JavaScript into three distinct categories:

- Behaviours: site-wide functionality and modules not tied to a visual UI element (such as our PageReady function).

- Components: functionality to add interactivity to our UI components (with more complex components broken down into sub-modules and nested under their parent component module).

- Utils: here we keep a constants file (to have a single source of truth for shared information like responsive breakpoints etc.) as well as individual files for any shared functionality that doesn’t belong to any one component. We can import these variables and functions into our modules as required.

Finally, we have an index.js file in the root directory to manage importing and initialising all our modules via our PageReady function.
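As a hedged sketch of what that root index.js could look like: the pageReady implementation below is an assumption (our actual PageReady function isn’t shown in this post), and the two placeholder modules stand in for what would normally be imports from the components and behaviours directories.

```javascript
// Hypothetical sketch of a PageReady behaviour: run `callback` once the
// document has been parsed. `doc` is injectable so it can be tested.
const pageReady = (callback, doc = document) => {
  if (doc.readyState === 'loading') {
    doc.addEventListener('DOMContentLoaded', callback, { once: true })
  } else {
    callback() // the DOM was already parsed before this script ran
  }
}

// In a real index.js these would be imported from components/ and behaviours/.
// Each placeholder exposes the init() method from its public API.
const modalModule = { init: () => console.log('modal ready') }
const navigationModule = { init: () => console.log('navigation ready') }

const siteModules = [modalModule, navigationModule]

const initModules = () => {
  siteModules.forEach((siteModule) => siteModule.init())
}

// Guarded so the sketch can also be loaded outside a browser.
if (typeof document !== 'undefined') {
  pageReady(initModules)
}
```

Keeping a single list of modules in one place means adding a new component is a two-line change: one import and one entry in the list.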

Approaching DOM Manipulation

As mentioned above, we’re kind of stuck with DOM manipulation to add interactivity to our components following this approach. This is sad but also quite manageable for the most part.

When I was a baby dev, DOM manipulation with good ol’ jQuery was the default option. I created such monstrosities with this approach and my code inevitably wove itself into knots that were both too complex to unwind and incredibly brittle at the same time. If nothing else, that experience fostered some wisdom around how I approach DOM manipulation now.

Here are some tips to keep your code sane:

- Separate your concerns: Do not use the same class that you have used to apply styles to an element as a selector in your JavaScript. Tightly coupling your styles with JavaScript behaviour will usually lead to pain.

- Be explicit: Further to the first point, it’s a great idea to prefix any classes being used as a selector in your JavaScript with ‘js-’ (or something similar). This makes it clearer to other developers that removing that class in the templates is likely to change some form of interactive behaviour currently being applied to the element.

- Don’t assume success: Given how fragile DOM manipulation can be, it’s not uncommon for things to break. To avoid one error crippling all other functionality, wrap your code in try/catch blocks where it makes sense and make sure you’re checking that queried elements exist before manipulating them (rather than just assuming they do). Even better - set up tests for critical functionality.
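The last tip might be sketched like this. Both initSafely and createAccordion are hypothetical helpers written for this example, and the js-accordion class is a made-up selector following the ‘js-’ prefix convention above.

```javascript
// Hypothetical helper: initialise each module in isolation so one failure
// cannot prevent the rest from starting. Returns the names that failed.
const initSafely = (siteModules) => {
  const failed = []

  Object.entries(siteModules).forEach(([name, siteModule]) => {
    try {
      siteModule.init()
    } catch (error) {
      failed.push(name)
      console.error(`Could not initialise "${name}":`, error)
    }
  })

  return failed
}

// Hypothetical module that checks its element exists before touching it.
// `root` is any object with a querySelector method (normally `document`).
const createAccordion = (root) => ({
  init: () => {
    const accordion = root.querySelector('.js-accordion')
    if (!accordion) return // nothing to enhance on this page
    accordion.setAttribute('data-enhanced', 'true')
  },
})

// Guarded so the sketch can also be loaded outside a browser.
if (typeof document !== 'undefined') {
  initSafely({ accordion: createAccordion(document) })
}
```

Returning the list of failed module names is a design choice for this sketch: it gives you something to log or report without letting a single broken component take the rest of the page down with it.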

Configuring Laravel Mix as your build tool

As mentioned above, we have a src directory for all our raw code which gets minified and concatenated during our build step. To handle this (as well as compiling our Sass files and managing polyfills for older browsers), we use Laravel Mix. Laravel Mix is a wrapper for Webpack that lets us easily use the good bits without the cognitive overload of figuring out how Webpack actually works each time.

I won’t regurgitate the Laravel Mix documentation here but essentially you follow these steps to get set up:

- Install Laravel Mix using Yarn or npm. We’re also using the laravel-mix-polyfill extension, which will need to be installed alongside the main package.

- Create a file in the root of your project called webpack.mix.js and configure your build pipeline (more on this below).

- Include relevant script tags in your main Page.ss template (more on this below).

- Run the watch command locally to compile your assets as you make changes.

- Configure the production command to run automatically as part of your deploy process (or manage this step manually when you release to production if required).

Here’s some example config for our JavaScript from our Mix file:

    const mix = require('laravel-mix')
    require('laravel-mix-polyfill')

    // Placeholder path to the theme directory; adjust for your project
    const themePath = 'themes/theme-name/'

    /*
     * JAVASCRIPT
     * - Compile all JS assets into app.js
     * - Extract third-party libraries/Webpack manifest into separate file (see: https://laravel-mix.com/docs/6.0/extract)
     * - Create source maps for compiled assets
     */
    mix.js(`${themePath}src/js/index.js`, `${themePath}dist/app.js`).extract().sourceMaps()

    /**
     * Polyfill to support older browsers
     * See https://browsersl.ist/#q=%3E0.3%25%2C+last+2+versions%2C+not+dead
     */
    mix.polyfill({
      enabled: true,
      useBuiltIns: 'usage',
      targets: '>0.3%, last 2 versions, not dead',
    })

Let's break that down:

- The js() command takes our raw index.js JavaScript file as the first argument, transpiles it (based on settings passed in via the CLI as well as any other config in the Mix file) and outputs the result in our dist directory (defined by the second argument).

- The extract() command takes care of code splitting: third-party libraries go into vendor.js and the Webpack runtime into manifest.js, so visitors can keep their cached copy of the vendor code each time we change a minor detail in our custom JavaScript.

- The sourceMaps() command… creates source maps! These help with debugging as they map the transpiled code back to the source files.

- The polyfill() command uses the laravel-mix-polyfill extension to automatically polyfill newer JavaScript features for older browsers, based on the targets you define. You can use the Browserslist tool to help define your specific requirements.

With your Mix file configuration complete, you should be able to include your compiled JavaScript files at the end of your body tag in your themes/theme-name/templates/Page.ss file like so:

    <script src="$resourceURL('/themes/theme-name/dist/manifest.js')"></script>
    <script src="$resourceURL('/themes/theme-name/dist/vendor.js')"></script>
    <script src="$resourceURL('/themes/theme-name/dist/app.js')"></script>

To test everything is working, you should be able to run `npx mix watch` locally and watch Laravel Mix recompile your files each time they change.

Documenting your setup

Documentation - yay! I know it’s not everybody’s idea of a good time but… Rather than waste all this effort to set up a well-structured, maintainable codebase, let’s make sure the developer experience is as frictionless as possible (which will help keep it this way).

Make sure your project readme answers the following questions for any newbies tasked with making updates to your code:

- How do I set the project up?

- How do I run the build step for both local development and production?

- What patterns do I need to follow?

- How is the code structured?

- Where should I add a new feature?

- What are the browser support requirements?

- How do I test changes?

- Where can I go to find additional help?

Next steps: adding page transitions and reviewing our approach

In the final post of this series, we’ll cover how to bring a bit of joy to the user experience by adding the kind of page transition effect you might expect to see in a single-page application (SPA).

We’ll also do a bit of a retrospective on what went well and what still needs tweaking to find the optimal Vanilla JavaScript setup.